WHAT THE WEB CAN LEARN FROM THE SOCIAL BRAIN

Remember the early days of social networks and all the hype around Web 2.0, “the social web”? What started out as one form of online activity has taken the digital world by storm so thoroughly that by now it is an inherent part of it. With Facebook’s well over a billion users and smartphones in everyone’s pockets, people around the world are playing more social games, uploading more content and interacting with each other and with products on the web at an ever-increasing rate. With so much digital noise around us, it really is getting harder and harder to keep up, let alone find a haven of peace and quiet.

So what has the brain got to do with it?

With current brain imaging techniques, researchers can track which parts of the brain are active while a person does anything from watching a movie to being voted the weakest link. In recent years, brain studies have surfaced an unexpected finding that has been rattling the worlds of neuroscience and psychology and has led to the emergence of the new field of Social Neuroscience. Basically, what brain scientists across different disciplines have discovered is how central social thought is to the human mind. When you think about it, it makes perfect sense. The fact that humans can collaborate with each other is what has enabled us to build (and sadly also to break) great things throughout the history of humankind.

Without knowing what’s going on under the brain’s hood, you’d think the social nature of people is made possible simply by people talking to each other. But the social brain goes far beyond language. Language may be the direct, visible channel between people for sharing ideas, emotions and instructions, but by no means is it the only, or even the primary, one. Truth is, language accounts for a small fraction of human communication. The vast majority consists of non-verbal forms of communication that require special mechanisms in our brains. Those mechanisms are dedicated to deciphering what’s going on in the other person’s brain. We all have to be mind readers, if you will. The “social brain” is a set of specialized brain regions doing exactly that – decoding the mental states in the brains of people around us.

The social brain has three unique characteristics that make it so intriguing. First, it is “loud” by default. It constantly chatters in its own language – neural activity (neurons “firing” electric signals). Unlike the rest of the brain, the social brain regions are the only ones that don’t reduce their activity at rest. When a person simply lies inside a brain scanner (an fMRI machine) without doing anything, the entire brain becomes less active. The entire brain, apart from the social regions. In other words, we have an innate tendency to view the things around us as social beings. Think of how we personify everything – from gadgets to buildings, we tend to view the world as having a soul: feelings and wants and needs. That’s because the social brain doesn’t normally shut down.

And what about the social web? So much has been written about the noise it’s causing and the ADD generation we’re raising that I won’t even get into it. From Facebook to Twitter, commenting to liking – the social web is everywhere, constantly loud, constantly affecting us. But it’s not all bad. If you look at neural networks, noise is constantly there, and it can create progress and help overcome problems inherent to the system. Projecting that back to the online world, I’d argue that this noise in our collective social brain leads to bursts of innovation and creativity.

Another unique characteristic of the social brain is the existence of mirror neurons. These really are one of the most intriguing phenomena in the brain (and certainly one of the most controversial). Mirror neurons are, as their name suggests, neurons that mirror other neurons’ activity. Which neurons? Neurons in the brain of the person you’re interacting with. When someone you’re talking to is extremely happy, not only can you hear it in the ecstatic tone of their voice or see it in their smile; your very neurons “feel” it and mirror the “happy neurons” in the other person’s frontal lobe. In other words, social brain activity is infectious.

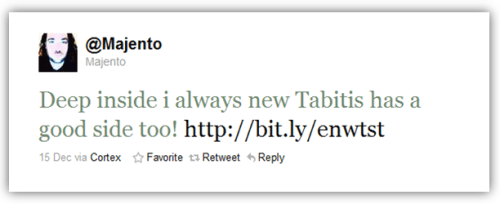

And where are mirror neurons on the web? For that we’ll have to define the web’s equivalent of a neuron, a task I’m not quite ready to take on (help, anyone?). However, the results of mirror neuron activity are quite apparent to me. What are memes if not infectious social behavior? What is virality, one of the most distinctive characteristics of the web, if not people echoing others’ behavior, thoughts and emotions? Just as memes spread through the social web, social brain activity can infect others and spread through the collective social brain.

In order to compensate for being constantly loud, the social brain has another unique characteristic without which we could hardly survive. The social brain turns itself down when something else is being computed. You’d hardly want to start personifying functions while trying to solve math equations now, would you? So when you’re trying to calculate something or focus on a hard problem, the social brain realizes it would only be interfering and therefore shuts down.

But what turns off the “social web”? Fact is, we stopped calling it the “social” web because the social layer has become as invisible as it is omnipresent. The noise is now everywhere. So how should the web turn down the volume when we’re doing other stuff? Our biggest problem is, there is no specialized mechanism inherent in the system, as there is in the social brain. Some people believe the problem isn’t technology. It’s us. But expecting people to cut themselves off is as good as expecting a neuron to stop firing. We are inherently designed to be drawn, to react.

Until the day that shutting off the noise is built into the system, it really is up to you to turn it down. So turn off your phone when you’re having dinner, and go outside more. The sun is shining, birds are chirping. Technology has still not taken complete control of our lives.